During the lockdown I’ve been “drinking from the firehose” trying to learn all of the Tanzu products. I’ve even been lucky enough to get custom coaching from experts like Sean Noyes (Tanzu Observability) and Jason Marchesano (NSX-T). Some products are easier to learn than others. Tanzu Observability is a SaaS product, so no homelab required. NSX-T, on the other hand (3.0 specifically), takes newer hardware, and with vSphere 7 everyone is learning the new tricks and traps.

Well, tonight I ran into blockers on both of my clusters, “big” and “little”, for different reasons.

The blocker on “big” is that the NICs don’t support jumbo frames, and that is a requirement for NSX-T. The blocker on “little” is that the lifecycle management (LCM) setup, new with vSphere 7, can’t be used with the new NSX-T manager. On top of that, I couldn’t figure out how to disable the LCM causing the issue.

I spent about five hours of the day turning knobs in my homelab. I learned. I am upgraded for sure. But I didn’t accomplish any of the goals I had set.

A little after-hours Slack ended up resulting in serious FOMO. Hat-tip to Craig Erdmann for inspiring me to NOT call it a night.

I switched gears to TKG.

Plan B

What follows are my late-night notes, after a long day with little progress. They are really notes-to-self, so I apologize for nothing.

- Download the TKG CLI for both Mac and Linux, because I planned on doing everything twice
- Download the 2 OVA files
  - OVA for node VMs: photon-3-v1.17.3_vmware.2.ova
  - OVA for load balancer VMs: photon-3-capv-haproxy-v0.6.3_vmware.1.ova
- Install the CLI

      $ tkg version
      Client:
          Version: v1.0.0
          Git commit: 60f6fd5f40101d6b78e95a33334498ecca86176e

      $ tkg --help
      VMware Tanzu Kubernetes Grid

      Consistently deploy and operate upstream Kubernetes across a variety of infrastructure providers.

      Documentation: https://docs.vmware.com/en/VMware-Tanzu-Kubernetes-Grid/index.html

      Usage:
        tkg [command]

      Available Commands:
        add         Add an existing resource to the current configuration
        config      Generate Cluster API provider configuration and cluster plans for creating Tanzu Kubernetes clusters
        create      Create a Tanzu Kubernetes cluster
        delete      Delete a management cluster or Tanzu Kubernetes cluster
        get         Get Tanzu Kubernetes Grid resource(s)
        help        Help about any command
        init        Create a Tanzu Kubernetes Grid management cluster
        scale       Scale a Tanzu Kubernetes cluster
        set         Configure some aspect of the Tanzu Kubernetes Grid CLI
        version     Display the version of the Tanzu Kubernetes Grid CLI

      Flags:
            --config string       Path to the the TKG config file (default is $HOME/.tkg/config.yaml)
        -h, --help                help for tkg
            --kubeconfig string   Optional, The kubeconfig file containing the management cluster's context
            --log_file string     If non-empty, use this log file
        -q, --quiet               Quiet (no output)
        -v, --v Level             number for the log level verbosity

      Use "tkg [command] --help" for more information about a command.

- Install both OVAs and convert both to templates
- Make sure that Docker is running on the Mac

      $ tkg init --ui
      Logs of the command execution can also be found at: /var/folders/t8/rn7bbfjs559_9dm5gx8tzb640000gn/T/tkg-20200421T234346350986334.log

      Validating the pre-requisites...
      Error: kubectl prerequisites validation failed: kubectl client version v1.15.5 is less than minimum supported kubectl client version 1.16.0

      Detailed log about the failure can be found at: /var/folders/t8/rn7bbfjs559_9dm5gx8tzb640000gn/T/tkg-20200421T234346350986334.log

- Quick fix to upgrade kubectl on the Mac… maybe not

      $ brew upgrade kubectl
      Updating Homebrew...
      Ignoring path homebrew-cask/
      To restore the stashed changes to /usr/local/Homebrew/Library/Taps/homebrew/homebrew-cask run:
        'cd /usr/local/Homebrew/Library/Taps/homebrew/homebrew-cask && git stash pop'
      ==> Auto-updated Homebrew!
      Updated 5 taps (homebrew/cask-versions, homebrew/core, homebrew/cask, minio/stable and snyk/tap).
      ==> Updated Formulae
      minio/stable/minio ✔  allure          dolt           jenkins  lft         lxc                  sile    vttest
      openssl@1.1 ✔         conan           gitlab-runner  juju     libosmium   phoronix-test-suite  texlab
      snyk/tap/snyk ✔       contentful-cli  goreleaser     lazygit  libxmlsec1  prometheus           vala
      ==> Updated Casks
      4k-video-downloader  fantastical   glip               krisp                postbox             stand
      abstract             focuswriter   glyphs             latexit              postico             terminus
      airdisplay           fontbase      google-chrome      macpilot             powershell-preview  trilium-notes
      brave-browser-dev    fsnotes       google-earth-pro   mailbutler           praat               ultimaker-cura
      chromium             futuniuniu    gpower             melodics             qownnotes           universal-media-server
      clover-configurator  ganache       harvest            microsoft-edge-beta  scaleft             visual-studio-code-insiders
      cryptomator          gearboy       hubstaff           opera-beta           screen              zappy
      dcv-viewer           gearsystem    iglance            opera-developer      second-life-viewer  zoomus
      docker-edge          geogebra      insync             osu-development      session
      drama                github        kapow              parallels            sound-control
      ==> Deleted Casks
      safaricookiecutter  safarisort
      ==> Upgrading 1 outdated package:
      kubectl 1.18.1 -> 1.18.2
      ==> Upgrading kubectl 1.18.1 -> 1.18.2
      ==> Downloading https://homebrew.bintray.com/bottles/kubernetes-cli-1.18.2.catalina.bottle.tar.gz
      ==> Downloading from https://akamai.bintray.com/5f/5f7df7b8226c75aff03a754057cef41150260e3a968d6fc4282738b01b1d8911?__gda__=exp=1587531453~hmac=333382f0ebbe1c732da937d9e888ecc5b2cc056
      ######################################################################## 100.0%
      ==> Pouring kubernetes-cli-1.18.2.catalina.bottle.tar.gz
      Error: The `brew link` step did not complete successfully
      The formula built, but is not symlinked into /usr/local
      Could not symlink bin/kubectl
      Target /usr/local/bin/kubectl
      already exists. You may want to remove it:
        rm '/usr/local/bin/kubectl'

      To force the link and overwrite all conflicting files:
        brew link --overwrite kubernetes-cli

      To list all files that would be deleted:
        brew link --overwrite --dry-run kubernetes-cli

      Possible conflicting files are:
      /usr/local/bin/kubectl
      ==> Caveats
      Bash completion has been installed to:
        /usr/local/etc/bash_completion.d

      zsh completions have been installed to:
        /usr/local/share/zsh/site-functions
      ==> Summary
      🍺  /usr/local/Cellar/kubernetes-cli/1.18.2: 232 files, 49.1MB
      Removing: /usr/local/Cellar/kubernetes-cli/1.18.1... (232 files, 49.1MB)
      Removing: /Users/dcarter/Library/Caches/Homebrew/kubernetes-cli--1.18.1.catalina.bottle.tar.gz... (13.3MB)
      ==> Checking for dependents of upgraded formulae...
      ==> No dependents found!

  I have a kubectl that isn’t managed by brew! That would explain why tkg saw v1.15.5 even though brew already had 1.18.1 installed: the unmanaged copy in /usr/local/bin was presumably the one on my PATH. What was I thinking? Easy fix. YOLO.

      $ rm /usr/local/bin/kubectl
      $ brew upgrade kubectl
      Updating Homebrew...
      Warning: kubectl 1.18.2 already installed
      $ brew link --overwrite kubernetes-cli
      Linking /usr/local/Cellar/kubernetes-cli/1.18.2... 228 symlinks created

- Try to init this again!
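The two prerequisites that bit me above (Docker running, and a new enough kubectl on the PATH) can be checked before launching the installer. What follows is a minimal preflight sketch in plain POSIX shell, not anything that ships with TKG. The `version_ge` helper and the `MIN_KUBECTL` variable are my own hypothetical names, and the 1.16.0 minimum is simply copied from the error message above.

```shell
#!/bin/sh
# Preflight sketch (assumption: not part of the tkg CLI).

# version_ge HAVE WANT: succeeds when HAVE >= WANT.
# Compares major.minor.patch numerically; an optional leading "v" is ignored.
version_ge() {
  have=${1#v}
  want=${2#v}
  for i in 1 2 3; do
    h=${have%%.*}
    w=${want%%.*}
    [ "${h:-0}" -gt "${w:-0}" ] && return 0
    [ "${h:-0}" -lt "${w:-0}" ] && return 1
    # Drop the field just compared; pad with 0 when no fields remain.
    case $have in *.*) have=${have#*.} ;; *) have=0 ;; esac
    case $want in *.*) want=${want#*.} ;; *) want=0 ;; esac
  done
  return 0
}

MIN_KUBECTL=1.16.0   # minimum quoted in the tkg error message

# Is the Docker daemon reachable?
if docker info >/dev/null 2>&1; then
  echo "Docker daemon is reachable"
else
  echo "Docker does not seem to be running"
fi

# Which kubectl wins on the PATH, and is it new enough?
if command -v kubectl >/dev/null 2>&1; then
  echo "kubectl on PATH: $(command -v kubectl)"
  # Take the first vX.Y.Z string in the client version output.
  found=$(kubectl version --client 2>/dev/null |
    grep -oE 'v[0-9]+\.[0-9]+\.[0-9]+' | head -n 1)
  if version_ge "${found:-0}" "$MIN_KUBECTL"; then
    echo "kubectl $found meets the $MIN_KUBECTL minimum"
  else
    echo "kubectl ${found:-unknown} is older than $MIN_KUBECTL"
  fi
else
  echo "kubectl not found on PATH"
fi
```

The path printed by `command -v kubectl` is the useful part here: it shows which copy gets picked up first, which is how a stray unmanaged binary can hide a newer brew-installed one.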

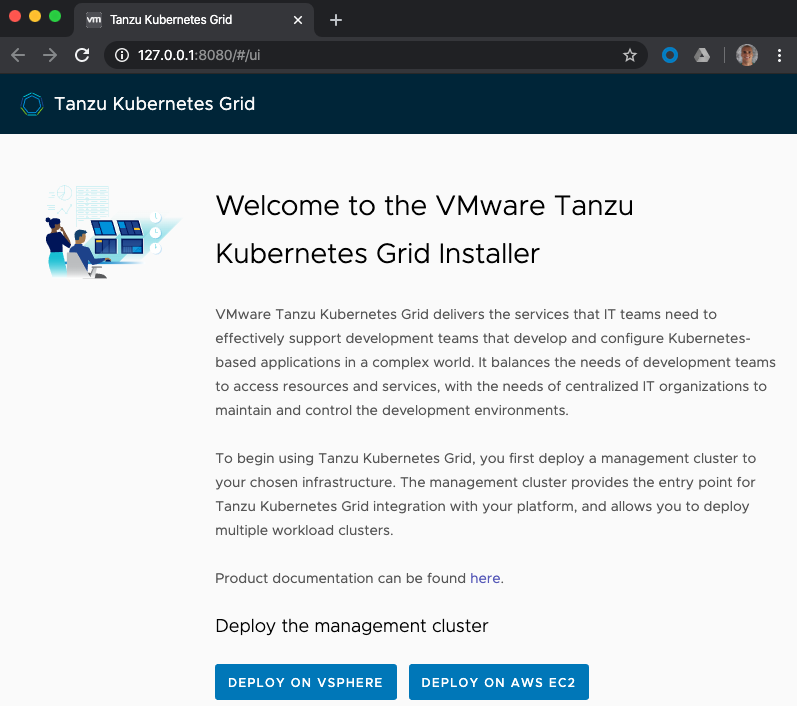

    $ tkg init --ui
    Logs of the command execution can also be found at: /var/folders/t8/rn7bbfjs559_9dm5gx8tzb640000gn/T/tkg-20200421T235126300226895.log

    Validating the pre-requisites...
    Serving kickstart UI at http://127.0.0.1:8080

Browser pops up with UI! Now I feel like I’m doing something!

It’s giving me the option of deploying to vSphere or AWS. I would probably have success by choosing AWS, but that feels like the easy path. I am trying to learn here, so I’ll keep deploying to my “big” cluster on vSphere.

After entering the vCenter Server info to validate vSphere, I’m shown this message:

You are about to provision a Kubernetes cluster on a vSphere 7.0.0 cluster that has not been optimized for Kubernetes. For the best experience of Kubernetes on vSphere, contact your vSphere administrator and ask them to enable the vSphere with Kubernetes feature.

Do you want to proceed without enabling the vSphere with Kubernetes feature?

Yep! “Proceed”

Development or Production?

- I choose “Production” control plane, which means 3 VMs, and I choose “small” instance types.

- I give the management cluster a name “plan-b-m-g-m-t” because it’s late, I’m tired, and it rhymes.

- I select the only API server load balancer available from the list.

Resources?

- Resource Pool: There is a “big/Resources” option which sounds good.

- VM Folder: There is “/vm” option or “/vm/discovered vms” so I’ll choose “/vm”

- Datastore: I choose the vsan option that is in the “big” cluster.

Kubernetes Network Settings:

- Network Name: big-tkg-pg

- Cluster Services CIDR: default: 100.64.0.0/13

- Cluster POD CIDR: default: 100.96.0.0/11
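It helps to see what those two default CIDRs actually cover, since they are ranges used inside the cluster and should not collide with the subnet the node VMs live on. Here is a small sketch in plain POSIX shell that expands a CIDR into its first and last address and tests two ranges for overlap. The helper names are my own, and `NODE_SUBNET` is a made-up placeholder, not the real subnet behind big-tkg-pg.

```shell
#!/bin/sh
# CIDR arithmetic sketch; helper names are hypothetical.

# Dotted quad -> 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# 32-bit integer -> dotted quad.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print "FIRST LAST" for a CIDR such as 100.64.0.0/13.
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  bits=${1#*/}
  mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
  first=$(( $base & $mask ))
  last=$(( $first | (4294967295 ^ $mask) ))
  echo "$(int_to_ip "$first") $(int_to_ip "$last")"
}

# Succeeds when the two CIDRs share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  # $1 $2 = first range, $3 $4 = second range
  [ "$(ip_to_int "$1")" -le "$(ip_to_int "$4")" ] &&
    [ "$(ip_to_int "$3")" -le "$(ip_to_int "$2")" ]
}

echo "Cluster Services CIDR: $(cidr_range 100.64.0.0/13)"
echo "Cluster Pod CIDR:      $(cidr_range 100.96.0.0/11)"

NODE_SUBNET=192.168.10.0/24   # hypothetical node network, for illustration only
for cidr in 100.64.0.0/13 100.96.0.0/11; do
  if cidrs_overlap "$NODE_SUBNET" "$cidr"; then
    echo "$NODE_SUBNET overlaps $cidr"
  else
    echo "$NODE_SUBNET does not overlap $cidr"
  fi
done
```

With the defaults, the Services range runs from 100.64.0.0 to 100.71.255.255 and the Pod range from 100.96.0.0 to 100.127.255.255, so the two do not overlap each other.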

Images

- OS Image with Kubernetes v1.17.3+vmware.2. There is only 1 option in my list right now, let’s give it a try!

There is a “review” section to check my work.

It all looks good.

At this point, it is after midnight.

I just want to get something working.

I want to go to bed having accomplished something.

Click the button!

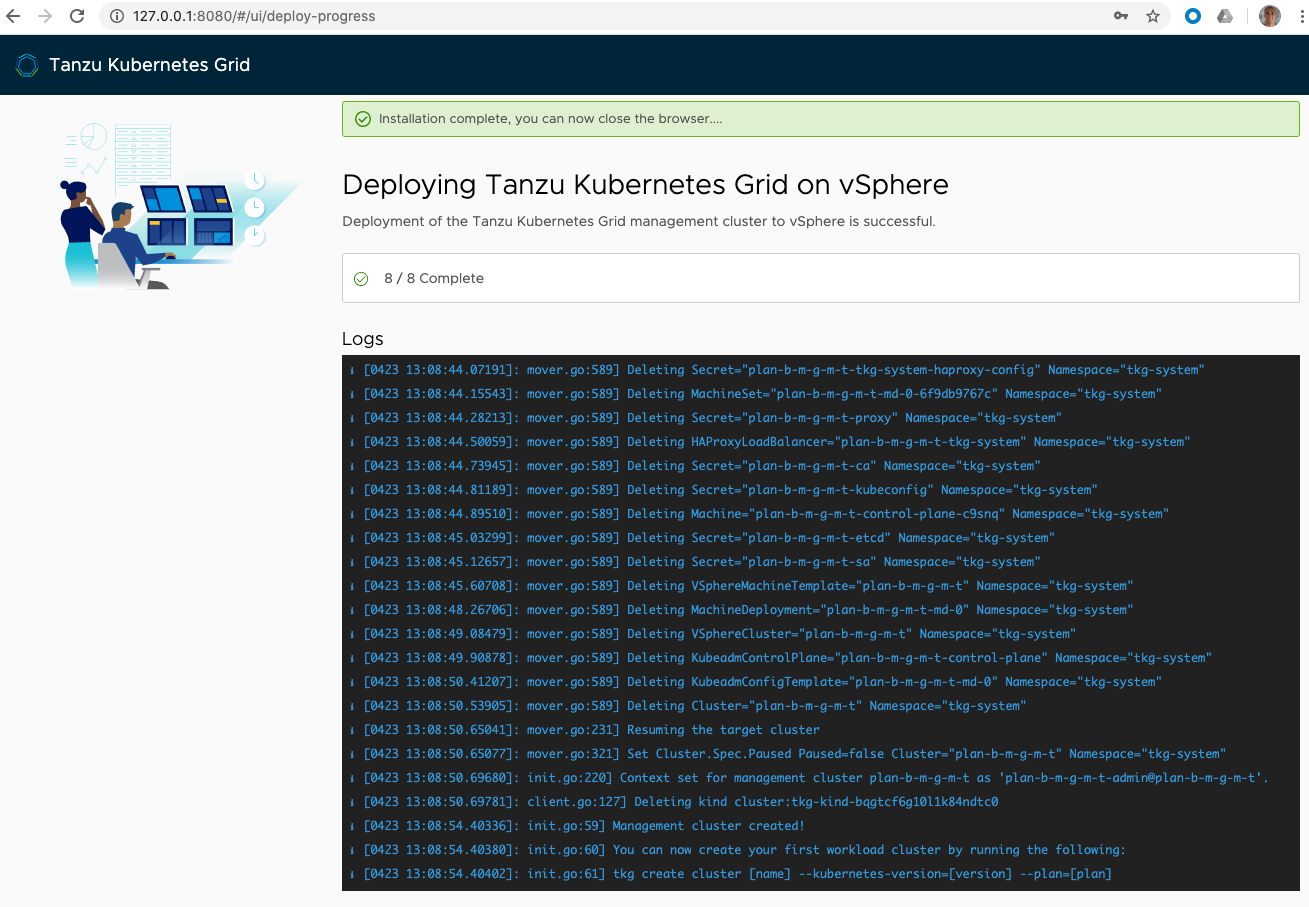

I made it about 10 minutes into the install. 4/8 complete. I just couldn’t sit and watch log lines anymore. When I checked back in the morning, it turned out that totally didn’t work for me!

Plan C?

Frustrated, I take the AWS path. As I expected, that worked first try. I’ll share that information later. I basically spent the rest of the day using TKG on AWS.

Back to Plan B

Having stepped away from the problem, and having had success on AWS, I took time to read the documentation. I realized that I needed DHCP set up on the network the cluster VMs attach to (the port group I picked under Kubernetes Network Settings). I had DHCP set up, but not for the subnet that I wanted to use. I deployed a pfSense appliance to the cluster to provide DHCP for this subnet on this specific port group.

Step through the above steps again, and everything works!

It took time, but reading the docs helped me find success!